The European Union introduced the Digital Services Act (DSA) to address the risks posed by online platforms. In particular, the act calls for auditing the risks associated with these platforms, which is crucial to ensure their safe and responsible operation.[1] However, the DSA does not provide clear guidelines on how these audits should be conducted, leaving room for various interpretations. This gap increases the likelihood that audits overlook critical insights, such as the intricacies of each individual platform component and the interconnectedness of the platform as a whole. Such a limited view can result in a flawed understanding of the platform's impact on users and lead to inadequate recommendations for improving its performance. A comprehensive auditing framework is therefore needed, one that considers the details of each component and their interdependencies so that the platform is assessed thoroughly and effectively.

In the past, platforms, and in particular very large online platforms (VLOPs), were often perceived as monolithic entities driven by a single algorithm. This perception is misleading. In reality, platforms like YouTube are made up of multiple components and different algorithms, each of which might follow a different functional logic. Understanding the differences between these components is crucial for accurate risk assessments. To analyze the risk posed by a platform, auditors need to identify the specific components that are involved, understand how these components and their interplay contribute to the risk, and determine which components have the highest impact. For example, in the case of YouTube, a person looking for election information might start with the platform's search engine and only then move on to the videos suggested by the recommendation engine. Looking at only one of those components in isolation is therefore not enough.

To underscore the significance of dissecting the platform into its individual components, we take YouTube as a case study. We use data collected in the summer of 2021 as part of a data donation project by the consortium DataSkop, coordinated by AlgorithmWatch, a non-profit research and advocacy organization. Our findings highlight considerable differences between the components of the platform and varying levels of risk associated with them. By quantifying these differences, the study offers a more nuanced understanding of the platform's ecosystem, including the interconnections and dependencies between its components.

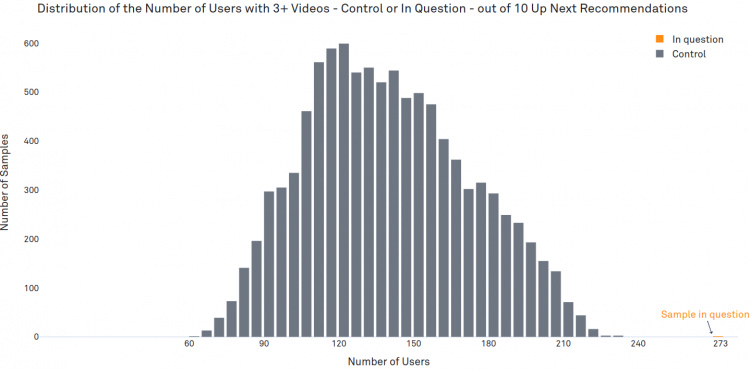

However, the complexity extends beyond this point. While examining the different components is a crucial step in understanding the platform's ecosystem, it is not enough to fully understand the user experience. For example, in 2021 YouTube set a goal to limit views of "borderline" content from recommendations to 0.5% of all views on the platform.[2] Borderline content refers to material that comes close to but does not quite violate the community guidelines. While 0.5% may sound like a small number, it is a platform-wide average and says nothing about how those views are distributed. If they are concentrated among a small group of users, they can still be a significant problem. Auditors therefore need to go beyond average metrics and examine the individual components in detail. The platform can be thought of as a tree of interconnected components or branches, each of which may branch out further or form clusters, and each of which requires careful analysis.
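To make the point about averages concrete, the following is a minimal sketch in Python. All numbers are invented for illustration and do not come from the DataSkop data or from YouTube; the function name `summarize` and the user counts are assumptions. It shows how a platform-wide borderline share of exactly 0.5% is compatible with a small group of users for whom half of all views are borderline.

```python
from statistics import median


def summarize(users):
    """Compare the platform-wide borderline share with per-user shares.

    `users` is a list of (borderline_views, total_views) pairs, one per user.
    """
    total_borderline = sum(b for b, _ in users)
    total_views = sum(t for _, t in users)
    per_user = [b / t for b, t in users]
    return {
        # Share of borderline views across the whole platform (the "average").
        "platform_share": total_borderline / total_views,
        # Share seen by the typical user.
        "median_user_share": median(per_user),
        # Share seen by the most exposed user.
        "max_user_share": max(per_user),
        # Number of users who saw any borderline content at all.
        "exposed_users": sum(1 for b, _ in users if b > 0),
    }


# Hypothetical population: 1,000 users with 200 views each.
# 990 users see no borderline content; 10 users see 100 borderline views each.
hypothetical_users = [(0, 200)] * 990 + [(100, 200)] * 10

stats = summarize(hypothetical_users)
for name, value in stats.items():
    print(f"{name}: {value}")
```

In this invented scenario the platform-wide figure meets the 0.5% target and the median user sees no borderline content, yet for ten users every second view is borderline. An audit that only checks the aggregate would not notice this group.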

In this publication, we aim to contribute to the development of useful auditing processes by analyzing YouTube as a demonstrative case. First, we provide a brief overview of the data at hand. Then, we introduce three YouTube components that are included in our dataset and explore their variations. Next, we examine each component individually in more detail. Finally, the publication concludes with recommendations for implementing a thorough auditing process.

It is important to note that this publication does not aim to provide an extensive definition of risks or exhaustive guidelines for auditing. Rather, we focus on showcasing the complexities of platform analysis and make the case for a more nuanced approach. For a more comprehensive overview of the Digital Services Act (DSA), including its objectives, importance, and related risks, audits, and assessments, we recommend consulting the SNV publication "Auditing Recommender Systems".

For an optimal viewing experience, we recommend accessing this publication from a desktop or laptop computer, as the charts and interactive elements may not display correctly on smaller mobile devices.