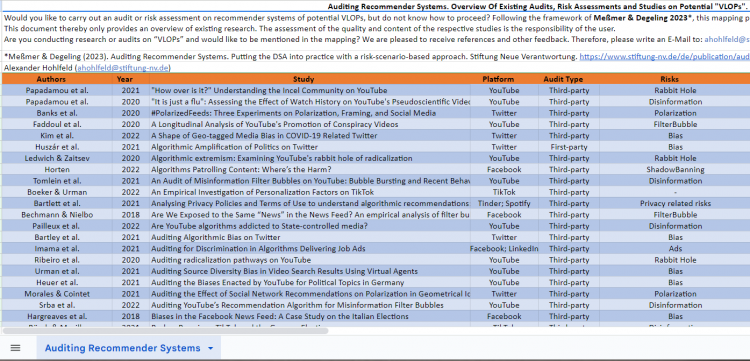

Auditing Recommender Systems. An Overview Of Existing Audits, Risk Assessments and Studies on Potential "VLOPs"

Article

The "Digital Services Act" (DSA) requires "Very Large Online Platforms" (VLOPs) operating in the European Union to conduct different audits and risk assessments. Our paper "Auditing Recommender Systems. Putting the DSA into practice with a risk-scenario-based approach" [1] provides general guidelines on conducting these audits, taking the operationalisation of systemic risks into account.

As mentioned in our paper, there is no "one size fits all" approach. Not every method can measure every risk, and each approach has its own benefits and limitations. The choice of technical method depends on the systemic risk being audited and the platform elements involved. To get results that are as precise as possible, auditors and researchers must share their experiences and findings on different audit methods.

This mapping is intended to support that exchange by providing an overview of existing audits, risk assessments and studies of potential "Very Large Online Platforms". It is limited to surveying existing research.

In our paper [2], we follow Sandvig et al. 2014 [3] and the Ada Lovelace Institute 2021 [4] and distinguish between:

- Code Audits

- Crowdsourced Audits

- Document Audits

- Architecture Audits

- Automated Audits

- Sock Puppet Audits

- API Audits

- Scraping Audits

- User Surveys

The choice architecture elements that can be involved include, for example:

- User experience

- User interface

- Algorithmic Logic, e.g., recommendation or search algorithms

- Content Moderation

- Terms & Conditions

- Advertisement

- Report Mechanisms

- Data-related practices

Are you conducting research or audits on "VLOPs" and would like to be mentioned in the mapping?

Are there audits or studies we should include? We welcome references and other feedback. Please send an e-mail to: ahohlfeld@stiftung-nv.de

[1] Anna-Katharina Meßmer and Martin Degeling, ‘Auditing Recommender Systems’, Stiftung Neue Verantwortung, 23 January 2023

[2] Meßmer and Degeling, ‘Auditing Recommender Systems’, 35–45

[3] Christian Sandvig et al., ‘Auditing Algorithms: Research Methods for Detecting Discrimination on Internet Platforms’, in Data and Discrimination: Converting Critical Concerns into Productive Inquiry, 2014

[4] Ada Lovelace Institute, ‘Technical Methods for Regulatory Inspection of Algorithmic Systems’ (London: Ada Lovelace Institute, 9 December 2021)

Alexander Hohlfeld